Tired of looking up Danbooru tags every time you want to generate an anime image in ComfyUI? Same.

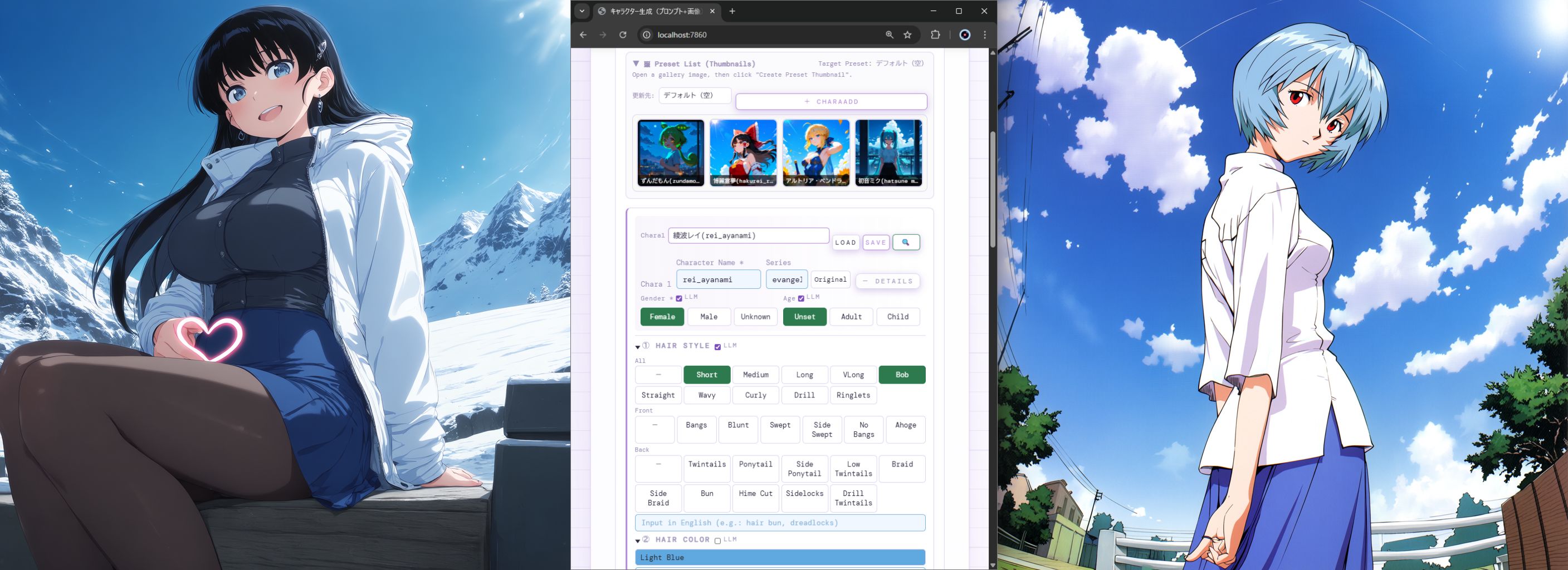

I built anima_pipeline — a local browser UI that sits between you and your ComfyUI Anima workflow. Select character details by clicking buttons, optionally let an LLM look up the Danbooru tags for you, and hit Generate. That's it.

→ GitHub (free, open source): https://tomotto1296.github.io/anima-pipeline/index_en.html

What it does

Point-and-click prompt building: Select hair style, hair color, eye color, expression, outfit, pose and more from buttons. The Danbooru prompt assembles itself, so there's no hand-typing tags.

Optional LLM for character lookup: Type a character name (works with Japanese names too, e.g. "博麗霊夢") and the LLM auto-generates the Danbooru tags. Supports:

LM Studio — fully local, free, no limits (needs decent GPU)

Gemini free tier — easiest to set up, no credit card, 250 req/day free

Any OpenAI-compatible API

No LLM? No problem — just type English tags directly. Faster anyway.

Multi-character: Configure up to 6 characters simultaneously in separate blocks. Great for duo/group shots.

LoRA injection: Card grid UI with thumbnail preview. Up to 4 slots. Auto-fetches your ComfyUI LoRA list.

Character presets: Save/load full character configs. Auto-generate presets from a character name using Danbooru Wiki + LLM, which fills in hair color, eye color, and outfit automatically.

Workflow switching: Drop any ComfyUI API-format workflow JSON into the workflows/ folder and select it from the dropdown. Node IDs are auto-detected.

Session auto-save: Picks up exactly where you left off on next launch.

Mobile support: Works from a phone or tablet on the same Wi-Fi.
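If you're curious what "node IDs auto-detected" can look like in practice, here is a minimal sketch. It is not anima_pipeline's actual code. It relies only on the shape of a ComfyUI API-format workflow (a JSON object mapping each node ID to a `class_type` and its `inputs`). The node types `KSampler` and `CLIPTextEncode`, the helper name `find_nodes_by_class`, and the tiny example workflow are assumptions for illustration; your Anima workflow may use different node types.

```python
import json
from pathlib import Path


def load_workflow(path: str) -> dict:
    """Load an API-format workflow JSON file (hypothetical helper)."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def find_nodes_by_class(workflow: dict, class_type: str) -> list[str]:
    """Return the IDs of all nodes whose class_type matches."""
    return [
        node_id
        for node_id, node in workflow.items()
        if isinstance(node, dict) and node.get("class_type") == class_type
    ]


# Hand-written example in the API-format shape; not a real Anima workflow.
example = {
    "3": {
        "class_type": "KSampler",
        "inputs": {"seed": 0, "positive": ["6", 0], "negative": ["7", 0]},
    },
    "6": {"class_type": "CLIPTextEncode", "inputs": {"text": "1girl, solo"}},
    "7": {"class_type": "CLIPTextEncode", "inputs": {"text": "lowres"}},
}

sampler_ids = find_nodes_by_class(example, "KSampler")
text_ids = find_nodes_by_class(example, "CLIPTextEncode")

# Links are [source_node_id, output_index], so following the sampler's
# "positive" input tells the positive prompt node apart from the negative one.
positive_id = example[sampler_ids[0]]["inputs"]["positive"][0]
print(sampler_ids, text_ids, positive_id)
```

The same lookup is handy for checking node IDs by hand: load your own file with `load_workflow("workflows/your_file.json")` (placeholder name) and search for the node types it actually contains.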

Requirements

ComfyUI 0.16.4+ with Anima workflow (grab from ComfyUI's Browse Templates → Anima → Save API Format)

Anima model files from Hugging Face: https://huggingface.co/circlestone-labs/Anima/tree/main/split_files

qwen_3_06b_base.safetensors → ComfyUI/models/text_encoders/

qwen_image_vae.safetensors → ComfyUI/models/vae/

anima-preview.safetensors → ComfyUI/models/diffusion_models/

Python 3.10+

LLM — optional
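To double-check that the three Anima model files ended up in the right folders, a small script like the one below can help. This is only a sketch: the file names and subfolders come from the list above, while the function name `missing_model_files` and the install path in the usage comment are made up for illustration.

```python
from pathlib import Path

# File name -> subfolder under ComfyUI/models/, as listed in the requirements.
EXPECTED = {
    "qwen_3_06b_base.safetensors": "text_encoders",
    "qwen_image_vae.safetensors": "vae",
    "anima-preview.safetensors": "diffusion_models",
}


def missing_model_files(comfyui_root: str) -> list[str]:
    """Return the expected model paths that do not exist under comfyui_root."""
    models = Path(comfyui_root) / "models"
    return [
        str(models / folder / name)
        for name, folder in EXPECTED.items()
        if not (models / folder / name).is_file()
    ]


# Usage (the path is a placeholder for wherever ComfyUI is installed):
# for path in missing_model_files("C:/ComfyUI"):
#     print("missing:", path)
```

An empty result means all three files are where ComfyUI expects them.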

Setup (5 min)

Download ZIP from GitHub and extract

Get Anima workflow JSON from ComfyUI → Save (API Format) → place in workflows/

Double-click start_anima_pipeline.bat (Windows) or run python anima_pipeline.py

Open http://localhost:7860

Open Settings → set ComfyUI URL → Save. Done.

For LLM: select platform in Settings → enter API key (Gemini) or URL (LM Studio) → Save.

Troubleshooting

| Problem | Fix |
| --- | --- |
| Can't connect to ComfyUI | Check URL / make sure ComfyUI is running |
| LLM errors | Re-check API key / Gemini free tier: 250 req/day |
| LoRA thumbnails not showing | Set ComfyUI output folder as absolute path in Settings |
| Wrong Node IDs | Open your workflow JSON and verify node IDs manually |
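For the "Can't connect to ComfyUI" case, you can test the URL outside the UI with a few lines of Python. This is a rough sketch, not part of anima_pipeline: it assumes your ComfyUI build answers on its `/system_stats` endpoint, and the function name `comfyui_is_up` is hypothetical. ComfyUI listens on http://127.0.0.1:8188 by default; use whatever URL you entered in Settings.

```python
import http.client
import urllib.error
import urllib.request


def comfyui_is_up(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if a GET on <base_url>/system_stats answers with HTTP 200."""
    url = base_url.rstrip("/") + "/system_stats"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # Connection refused, timeout, bad status line, or malformed URL.
        return False


# Usage:
# print(comfyui_is_up("http://127.0.0.1:8188"))
```

If this returns False while ComfyUI is running, the URL or port in Settings is the likely culprit. When connecting from another device, ComfyUI may also need to be started so that it listens on the network rather than only on localhost.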

Latest update – v1.5.01 (2026-03-23)

Database-driven generation history + re-editing workflow based on history

Hierarchical presets (character / scene / camera / quality / LoRA / composite)

Ability to save multiple named sessions

Setup self-diagnostic UI (/diagnostics)

Support for separate Japanese and English input for character and project names

Automatic configuration completion and stabilization at startup

v1.4.7 (2026-03-20)

Japanese/English UI toggle (auto-detects OS language)

Log system with API key masking + ZIP export for easy bug reports

Character preset thumbnail gallery with lazy loading

Various bug fixes and stability improvements

Full changelog: https://github.com/tomotto1296/anima-pipeline/releases/tag/v1.4.7

Questions and feedback welcome — drop a comment or open an issue on GitHub.

Follow for updates: @RHU_AIstudio on X