How to Train Icons

First, prepare an icon dataset that meets the following requirements:

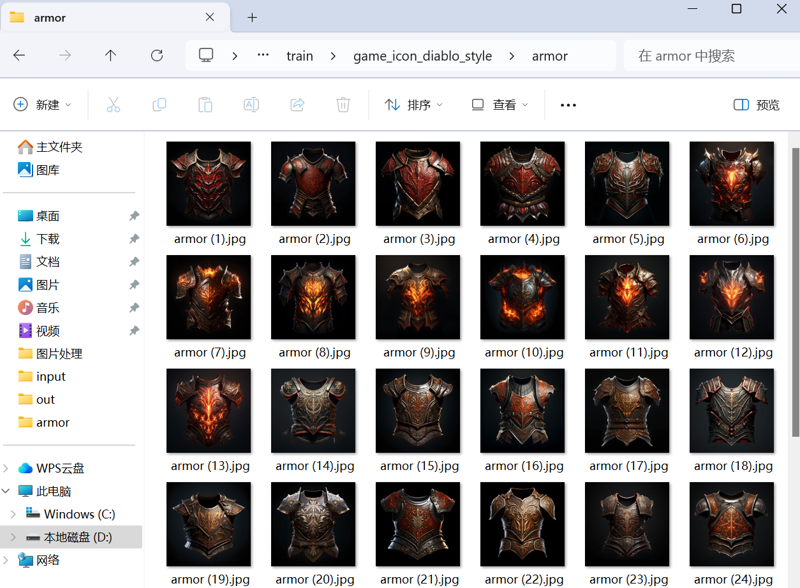

Images should be at least 1024 pixels in resolution and sharp.

Ensure a consistent artistic style.

Include as wide a variety of people and objects as possible.
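The resolution requirement can be checked automatically before training. The following is a minimal sketch, not part of the original workflow: it assumes the icons are PNG files, that "at least 1024 pixels" refers to the shorter side, and the function names `png_size` and `find_small_icons` are made up for illustration. It reads the width and height straight from the PNG header, so it needs no third-party library.

```python
import struct
from pathlib import Path

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_size(path):
    """Return (width, height) read from the IHDR chunk of a PNG file."""
    with open(path, "rb") as f:
        header = f.read(24)
    # Bytes 0-7: signature, 8-11: chunk length, 12-15: chunk type "IHDR",
    # 16-19: width, 20-23: height (both big-endian unsigned integers).
    if len(header) < 24 or header[:8] != PNG_SIGNATURE or header[12:16] != b"IHDR":
        raise ValueError(f"{path} is not a valid PNG file")
    width, height = struct.unpack(">II", header[16:24])
    return width, height


def find_small_icons(root, min_side=1024):
    """List PNG files under root whose shorter side is below min_side (assumed rule)."""
    too_small = []
    for path in sorted(Path(root).rglob("*.png")):
        width, height = png_size(path)
        if min(width, height) < min_side:
            too_small.append((path, width, height))
    return too_small
```

Calling `find_small_icons("dataset")` on a hypothetical `dataset` folder returns the files that should be upscaled or replaced. Sharpness and style consistency still need a visual check.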

Here is a reference dataset of icons: https://huggingface.co/datasets/ErhaChen/game_icon_diablo_style

Here is the link to the model:

https://civitai.com/models/209703/sdxlgame-icon-or-diablo-style-or-dataset

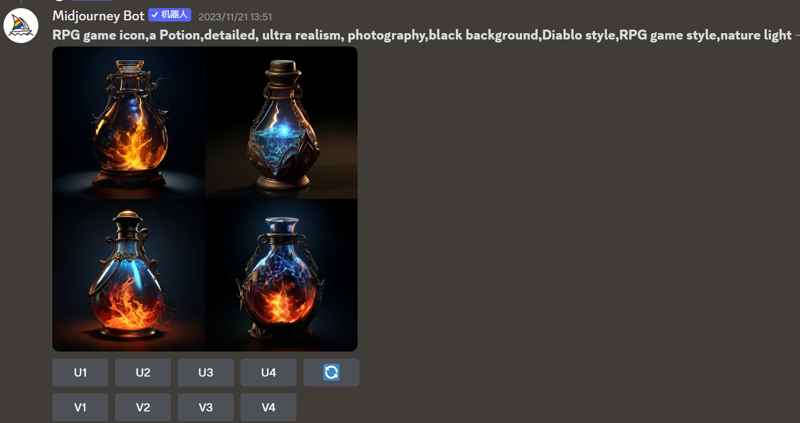

If you don't have suitable icon images, you can generate them with Midjourney.

Generate many types of icons, including but not limited to: one-handed swords, great swords, daggers, shields, wands, armor, leggings, boots, rings, necklaces, and blood vials. Don't forget to upscale the images. For example:

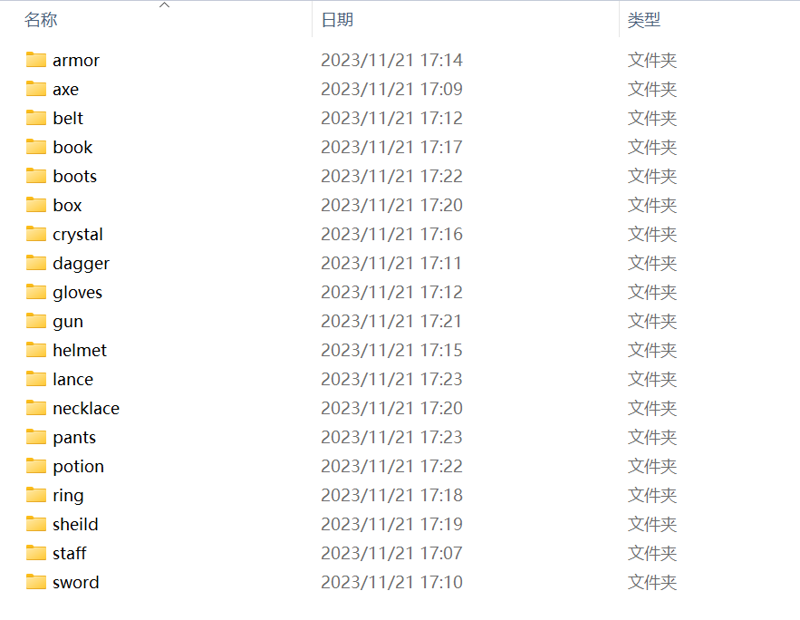

Next, we need to categorize these icons into folders, like this:

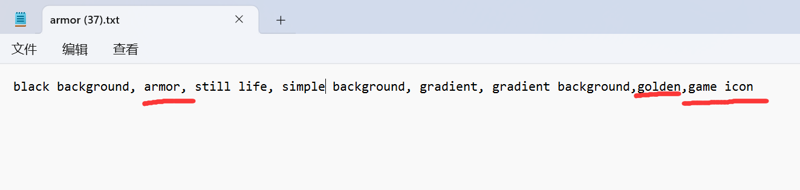

Next, annotate the images. You can use the WD14-tagger for this, but note that its recognition of icons is highly inaccurate: it may tag items from different categories as the same item. For instance, it might identify swords, axes, and wands all as "sword". Training on captions like these would lead to training failure.
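One way to spot this kind of mislabeling is to compare each caption with the folder its image sits in. The sketch below makes several assumptions that are not in the original: the icons are already sorted into category folders, each caption is a `.txt` file of comma-separated tags next to its image, and each folder name is the tag you expect to see. The function name `find_mismatched_captions` is hypothetical.

```python
from pathlib import Path


def find_mismatched_captions(dataset_root):
    """Return (caption_path, tags) for captions that lack their folder's category tag."""
    mismatches = []
    for caption_path in sorted(Path(dataset_root).glob("*/*.txt")):
        category = caption_path.parent.name.lower()
        text = caption_path.read_text(encoding="utf-8")
        # Split the caption into individual tags and normalize them.
        tags = [tag.strip().lower() for tag in text.split(",")]
        if category not in tags:
            mismatches.append((caption_path, tags))
    return mismatches
```

A caption in an `axe` folder that contains only `sword, weapon` would be reported, which makes it a candidate for manual correction.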

So, we need to review the annotation files. Here, I recommend sorting the icons by category and placing them into their respective folders. Then assign a unique identifier and a trigger word to each category of icons. For example, label all swords as "sword,game icon", all boots as "boots,game icon", and so on.
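The labeling rule above can be sketched as a small script. This is an illustration under stated assumptions, not the author's tooling: it assumes each category folder is named after its identifier (for example `sword` or `boots`), that captions live in a `.txt` file with the same name as the image, and the helper name `write_captions` and the extension list are made up here. Note that it overwrites any existing caption, including WD14-tagger output.

```python
from pathlib import Path

IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".webp"}  # assumed image types
TRIGGER_WORD = "game icon"


def write_captions(dataset_root, trigger_word=TRIGGER_WORD):
    """Write '<folder name>,<trigger word>' next to every image in each category folder."""
    written = []
    for category_dir in sorted(Path(dataset_root).iterdir()):
        if not category_dir.is_dir():
            continue
        # The folder name doubles as the category identifier, e.g. "sword,game icon".
        caption = f"{category_dir.name},{trigger_word}"
        for image_path in sorted(category_dir.iterdir()):
            if image_path.suffix.lower() in IMAGE_EXTENSIONS:
                caption_path = image_path.with_suffix(".txt")
                caption_path.write_text(caption, encoding="utf-8")
                written.append(caption_path)
    return written
```

Using the folder name as the identifier keeps the caption and the category in sync, so a misfiled image is the only way to get a wrong label.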

During training, you can place all the images and annotation files into the same folder.
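Merging the category folders into one training folder can be done by hand or with a short script. The sketch below is one possible approach under assumptions of my own: the helper name `flatten_dataset` is hypothetical, and each file is prefixed with its category name so that identically named files from different folders do not overwrite each other. An image and its caption get the same prefix, so they stay paired.

```python
import shutil
from pathlib import Path


def flatten_dataset(dataset_root, output_dir):
    """Copy every file from each category folder into one flat output folder."""
    output = Path(output_dir)
    output.mkdir(parents=True, exist_ok=True)
    copied = []
    for category_dir in sorted(Path(dataset_root).iterdir()):
        # Skip loose files and the output folder itself if it lives inside the root.
        if not category_dir.is_dir() or category_dir.resolve() == output.resolve():
            continue
        for src in sorted(category_dir.iterdir()):
            if src.is_file():
                # Prefix with the category so "sword/a.png" becomes "sword_a.png".
                dst = output / f"{category_dir.name}_{src.name}"
                shutil.copy2(src, dst)
                copied.append(dst)
    return copied
```

The files are copied rather than moved, so the categorized folders remain available for later review.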

Now, you can proceed with training. The training parameters are as follows:

device : 4090

instance prompt : game icon

class prompt : style

image count : 661

batch size : 5

repeats : 20

batches per epoch : 2644

epoch : 10

clip_skip : 1

learning_rate : 0.0012

text_encoder_lr : 0.0012

unet_lr : 0.0012

max_resolution : 1024,1024

min_bucket_reso : 256

max_bucket_reso : 2048

network_dim : 128

network_alpha : 1
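The "batches per epoch" value follows from the image count, repeats, and batch size listed above. The short calculation below reproduces it. The function name `training_steps` is only illustrative, and the total step count assumes that every epoch runs the full number of batches.

```python
import math


def training_steps(image_count, repeats, batch_size, epochs):
    """Return (batches_per_epoch, total_steps) for the given settings."""
    samples_per_epoch = image_count * repeats
    # Round up so a final partial batch still counts as one step.
    batches_per_epoch = math.ceil(samples_per_epoch / batch_size)
    return batches_per_epoch, batches_per_epoch * epochs


batches_per_epoch, total_steps = training_steps(
    image_count=661, repeats=20, batch_size=5, epochs=10
)
print(batches_per_epoch)  # 2644
print(total_steps)        # 26440
```

With 661 images repeated 20 times, each epoch sees 13,220 samples, which at a batch size of 5 gives the 2644 batches listed above.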