This node was designed to help AI image creators to generate prompts for human portraits.

Install

To install comfyui-portrait-master:

open a terminal in the ComfyUI installation folder

type:

cd custom_nodes

type:

git clone https://github.com/florestefano1975/comfyui-portrait-master

restart ComfyUI

We recommend the use of ComfyUI Manager to install the additional custom nodes needed for the workflow.

Update

To update comfyui-portrait-master:

open a terminal in the ComfyUI installation folder

type:

cd custom_nodes

type:

cd comfyui-portrait-master

type:

git pull

restart ComfyUI

Warning: the update command overwrites files that users have modified or customized.

Available Options

shot: sets the shot type

shot_weight: coefficient (weight) of the shot type

gender: sets the character's gender

androgynous: coefficient (weight) to change the genetic appearance of the character

nationality_1: sets the first ethnicity

nationality_2: sets the second ethnicity

nationality_mix: controls the mix between nationality_1 and nationality_2, using the syntax [nationality_1: nationality_2: nationality_mix]. ComfyUI does not recognize this syntax natively, so we recommend using comfyui-prompt-control. This feature is still being tested.
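
As a rough illustration of the mix syntax described above, the following Python sketch builds the bracketed token from the three values. The helper name `nationality_mix_token`, the spacing inside the brackets, and the fallback when only one nationality is set are assumptions made for this example; this is not the node's actual code.

```python
def nationality_mix_token(nationality_1: str, nationality_2: str, mix: float) -> str:
    """Build a token in the form [nationality_1:nationality_2:mix].

    Hypothetical helper: "-" is treated as "not set", mirroring the
    null value used by the node's options.
    """
    first_set = nationality_1 != "-"
    second_set = nationality_2 != "-"
    if first_set and second_set:
        return f"[{nationality_1}:{nationality_2}:{mix:.2f}]"
    if first_set:
        return nationality_1
    if second_set:
        return nationality_2
    return ""


print(nationality_mix_token("Italian", "Japanese", 0.5))
```

A token in this form is only interpreted as a blend when an extension such as comfyui-prompt-control handles it; otherwise it reaches the model as plain text.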

eyes_color: sets the eye color

hair_color: sets the hair color

facial_expression / facial_expression_weight: apply and adjust the character's expression

face_shape / face_shape_weight: apply and adjust the face shape

facial_asymmetry: coefficient (weight) to set the asymmetry of the face

hairs_style: hairstyle selector

disheveled: coefficient (weight) of the disheveled effect

age: the age of the subject portrayed

natural_skin: coefficient (weight) to control the natural look of the skin

skin_details: coefficient (weight) of the skin detail

skin_pores: coefficient (weight) of the skin pores

dimples: coefficient (weight) for controlling facial dimples

freckles: coefficient (weight) of the freckles

moles: coefficient (weight) for the presence of moles on the skin

skin_imperfections: coefficient (weight) to introduce skin imperfections

eyes_details: coefficient (weight) for the general detail of the eyes

iris_details: coefficient (weight) for the iris detail

circular_iris: coefficient (weight) to increase or force the circular shape of the iris

circular_pupil: coefficient (weight) to increase or force the circular shape of the pupil

light_type: sets the global illumination

light_direction: sets the direction of the light. This feature is still being tested.

photorealism_improvement: experimental option to improve photorealism and the final result

prompt_start: portion of the prompt that is inserted at the beginning

prompt_additional: portion of the prompt that is inserted at an intermediate point

prompt_end: portion of the prompt that is inserted at the end

negative_prompt: the negative prompt is integrated into the node so that it can be adjusted according to the settings

style_1 / style_1_weight: apply and adjust the first style

style_2 / style_2_weight: apply and adjust the second style

Parameters with a null value (-) or set to 0.00 are not included in the generated prompt.

The node outputs two strings: the positive prompt and the negative prompt.
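
The omission rule for null and zero-weight parameters can be pictured with a short Python sketch. The function name `build_terms` and the sample values are hypothetical, and the `(text:weight)` form is the usual prompt weighting syntax rather than a confirmed detail of how the node formats each option.

```python
NULL_VALUE = "-"  # the null choice shown in the node's option lists


def build_terms(options):
    """Return "(text:weight)" terms, skipping null and zero-weight entries.

    `options` is a list of (text, weight) pairs; this structure is an
    assumption for illustration only.
    """
    terms = []
    for text, weight in options:
        if text == NULL_VALUE or weight == 0:
            continue  # not included in the generated prompt
        terms.append(f"({text}:{weight:.2f})")
    return terms


print(build_terms([("freckles", 0.6), ("moles", 0.0), ("-", 1.0)]))
```

Only the first pair survives here, because the second has a weight of 0.00 and the third is the null value.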

Customizations

The lists subfolder contains the .txt files that populate the lists for some node options. You can open these files and customize their entries.
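
To show how such a list file could feed an option menu, here is a minimal Python sketch. It assumes one entry per line and ignores blank lines; the function name `load_options` and the file name in the usage note are hypothetical, and the node's real loading code may differ.

```python
from pathlib import Path


def load_options(path: Path) -> list[str]:
    """Read one option per line from a text file, dropping blank lines.

    Assumption: the list files are plain UTF-8 text with one entry per line.
    """
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line.strip() for line in lines if line.strip()]
```

For example, `load_options(Path("lists") / "my_list.txt")` would return the entries of a hypothetical `my_list.txt` in file order, so adding a line to the file adds a choice to the list.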

Workflow

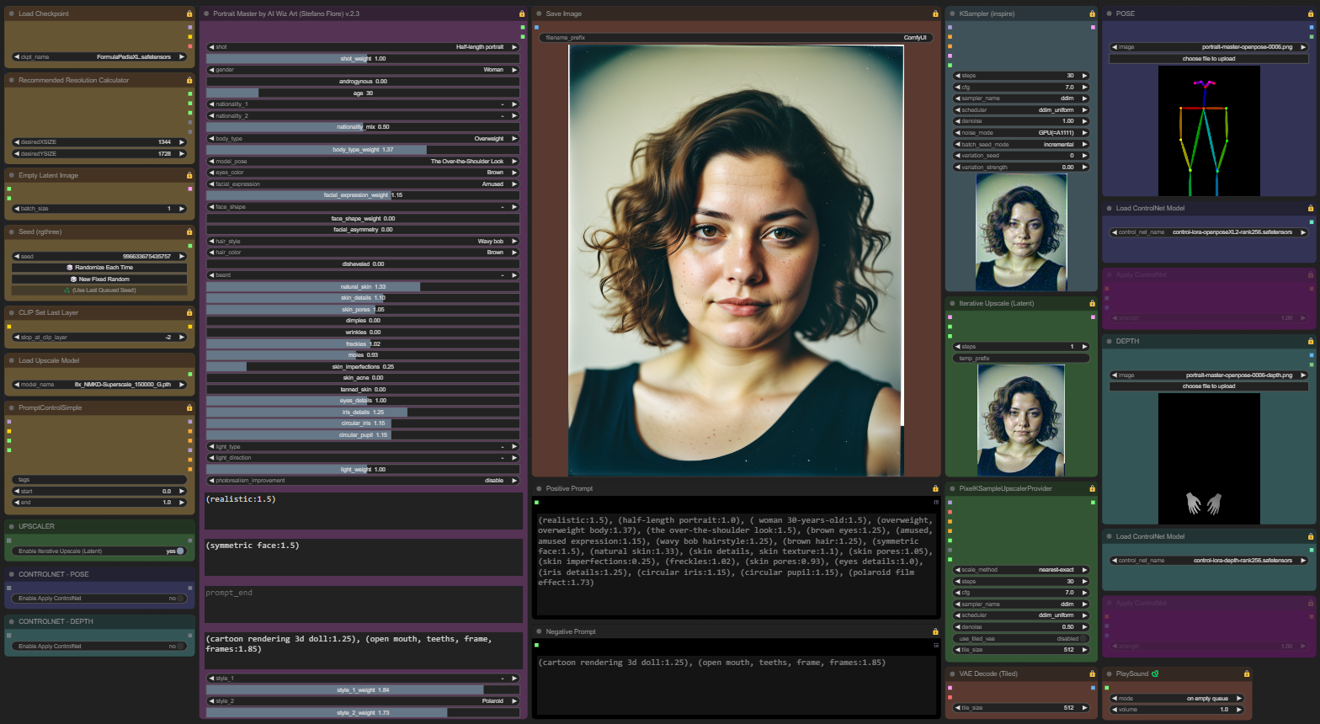

The portrait-master-controlnet2x-workflow.json file contains the workflow designed to work properly with Portrait Master.

An upscaler and two ControlNet nodes have been integrated to manage the pose of the characters. I inserted three switches to disable the upscaler and the ControlNet nodes if necessary. The coloring of the nodes will help you understand how the switches affect the workflow.

For ControlNet to work correctly with SDXL checkpoints, download these files:

and copy them into the ./models/controlnet/ folder of ComfyUI. Other similar files for ControlNet are available at this link.

The Portrait Master openpose folder contains some sample files for use with the ControlNet nodes. To generate other poses, use the free portal https://openposeai.com/

Workflow performance

The workflow is designed to obtain the right balance between quality and generative performance. You can change the settings of the two KSamplers to adapt them to your needs.

Tested on Google Colab, the workflow generates a high-resolution image in 60 seconds on a V100 GPU and in 30 seconds on an A100 GPU.

Model Pose Library

The model_pose option allows you to use a list of default poses. You need to disable ControlNet in this case and adjust framing with the shot option.

Practical advice

Using high values for the skin and eye detail parameters may override the chosen shot setting. In this case, reduce the skin and eye parameter values, or add (closeup, close up, close-up:1.5) to the negative prompt, adjusting the weight as needed.

For total control of the pose, use the ControlNet nodes integrated into the workflow, setting the shot parameter to null (-).

Optimal use of prompt fields

prompt_start: specify the type of image you want, for example realistic.

prompt_additional: its content is inserted between prompt_start and the part of the prompt automatically generated by the node. Use it for clothing and other specific characteristics of the character, and optionally for the setting or background.

prompt_end: use this field for other requests to the AI, keeping in mind that they carry less weight than the rest of the instructions. For example, you can move the background or environment description here. This field is optional.

negative_prompt: works as usual and lets you declare what you don't want in the image.
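
The field order described above can be sketched in Python as follows. The function name `assemble_prompt`, the comma separator, and the sample text are assumptions for illustration; only the ordering (prompt_start, prompt_additional, the node's generated part, prompt_end) comes from the descriptions above.

```python
def assemble_prompt(prompt_start: str, prompt_additional: str,
                    generated: str, prompt_end: str = "") -> str:
    """Join the prompt fields in the documented order, skipping empty ones.

    `generated` stands in for the part the node builds from its options.
    The ", " separator is an assumption of this sketch.
    """
    parts = [prompt_start, prompt_additional, generated, prompt_end]
    return ", ".join(p.strip() for p in parts if p and p.strip())


print(assemble_prompt(
    "realistic photo",
    "red dress, city street",
    "(portrait:1.20), woman",
))
```

Because prompt_end is optional, leaving it empty simply drops it from the joined string.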

Examples

In the future, several example images in PNG format will be added to the examples folder. You can load them into ComfyUI to reuse their settings.

SDXL Turbo

ComfyUI Portrait Master also works correctly with SDXL Turbo.

Notes

When image generation starts, you can read the complete prompt created by the node in the console.

The effectiveness of the parameters depends on the quality of the checkpoint used.

For advanced photorealism we recommend FormulaXL 2.0.