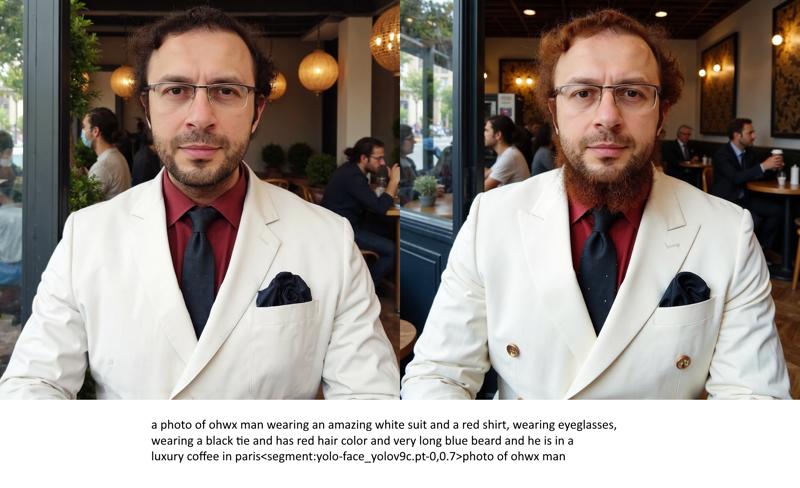

Full fine-tuning of FLUX yields much better results than LoRA training, as expected: overfitting and bleeding are greatly reduced. Check the oldest comment for more information. The images compare LoRA vs the fully fine-tuned checkpoint

Configs and Full Experiments

Full configs and grid files are shared here:

Details

I am still rigorously testing different hyperparameters and comparing the impact of each one to find the best workflow

So far I have done 16 different full trainings and am completing 8 more at the moment

I am using my poor 15-image dataset, which is prone to overfitting, for experimentation (4th image)

I have already shown that with a better dataset the results become many times better and expressions are generated perfectly

Here is an example case: https://www.reddit.com/r/FluxAI/comments/1ffz9uc/tried_expressions_with_flux_lora_training_with_my/

Conclusions

When the results are analyzed, fine-tuning is far less overfit, more generalized, and better quality

In the first 2 images, it is able to change hair color and add a beard much better, which means less overfitting

In the third image, you will notice that the armor is much better, again showing less overfitting

I noticed that the environment and clothing are much less overfit and better quality

Disadvantages

Kohya still doesn't have FP8 training, so 24 GB GPUs get a huge speed drop

Moreover, 48 GB GPUs have to use the Fused Backward Pass optimization, so they get some speed drop

16 GB GPUs get a much more aggressive speed drop due to the lack of FP8

Clip-L and T5 training are still not supported

Speeds

Rank 1 Fast Config - uses 27.5 GB VRAM, 6.28 seconds / it (LoRA is 4.85 seconds / it)

Rank 1 Slower Config - uses 23.1 GB VRAM, 14.12 seconds / it (LoRA is 4.85 seconds / it)

Rank 1 Slowest Config - uses 15.5 GB VRAM, 39 seconds / it (LoRA is 6.05 seconds / it)
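
To put the numbers above in perspective, here is a minimal sketch that turns each pair of timings into a slowdown factor relative to LoRA. The timings and VRAM figures are copied from the list; the `configs` dictionary and the `slowdown` helper are just illustrative names, not part of any training tool

```python
# Timings copied from the list above:
# (full fine-tune seconds/it, LoRA seconds/it, VRAM in GB)
configs = {
    "Fast": (6.28, 4.85, 27.5),
    "Slower": (14.12, 4.85, 23.1),
    "Slowest": (39.0, 6.05, 15.5),
}


def slowdown(fine_tune_s_per_it, lora_s_per_it):
    """How many times slower one full fine-tune step is than one LoRA step."""
    return fine_tune_s_per_it / lora_s_per_it


for name, (ft, lora, vram) in configs.items():
    print(f"{name}: {vram} GB VRAM, {slowdown(ft, lora):.2f}x slower than LoRA")
```

This works out to roughly 1.3x, 2.9x and 6.4x slower per iteration for the Fast, Slower and Slowest configs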

Final Info

Saved checkpoints are FP16 and thus 23.8 GB (no Clip-L or T5 trained)
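
The checkpoint size follows from simple arithmetic, sketched below. It assumes the file is essentially all weights, that FP16 stores 2 bytes per weight, and that GB means 10^9 bytes. The FP8 figure is only a hypothetical file size at 1 byte per weight, not a VRAM prediction, since training also needs memory for gradients, optimizer state and activations

```python
BYTES_PER_GB = 1e9  # assumption: decimal gigabytes


def params_from_size(size_gb, bytes_per_param):
    """Estimate the parameter count from a checkpoint size."""
    return size_gb * BYTES_PER_GB / bytes_per_param


def size_from_params(n_params, bytes_per_param):
    """Estimate the checkpoint size in GB from a parameter count."""
    return n_params * bytes_per_param / BYTES_PER_GB


n_params = params_from_size(23.8, 2)        # FP16: 2 bytes per weight
fp8_size_gb = size_from_params(n_params, 1)  # hypothetical FP8: 1 byte per weight

print(f"about {n_params / 1e9:.1f} billion parameters")
print(f"hypothetical FP8 checkpoint: about {fp8_size_gb:.1f} GB")
```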

According to Kohya, the applied optimizations don't change quality, so all configs are ranked as Rank 1 at the moment

I am still testing whether these optimizations make any impact on quality or not

I am still trying to find improved hyperparameters

All trainings are done at 1024x1024, so reducing the resolution would improve speed and reduce VRAM usage, but also reduce quality

Hopefully, when FP8 training arrives, I think even 12 GB GPUs will be able to do full fine-tuning very well at good speeds
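
As a rough guide to the resolution trade-off, the sketch below compares pixel counts against 1024x1024. The 768 and 512 sizes are hypothetical examples that I have not tested, and real speed and VRAM will not scale exactly with pixel count, because the model weights and optimizer state take the same memory at any resolution

```python
def relative_pixels(width, height, base=1024):
    """Pixel count of width x height as a fraction of base x base."""
    return (width * height) / (base * base)


# 768 and 512 are illustrative sizes, not tested configurations
for side in (1024, 768, 512):
    share = relative_pixels(side, side)
    print(f"{side}x{side}: {share:.2%} of the 1024x1024 pixel count")
```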