Previously, all models posted on this account were trained with the HCP-Diffusion framework, and all images were generated with it as well. However, because the HCP framework did not initially support the highres fix feature found in a1111's webui, every image we posted on Civitai until now was generated directly. This meant no highres fix, no adetailer, no controlnet, NOTHING AT ALL for us.

Recently, with the addition of the workflow feature to the HCP framework, we were finally able to generate high-resolution images with highres fix. To our surprise, many of the quality issues previously reported for most of our models disappeared as soon as highres fix was applied. We ran three simple comparisons using MeinaMix V11 as the base model.

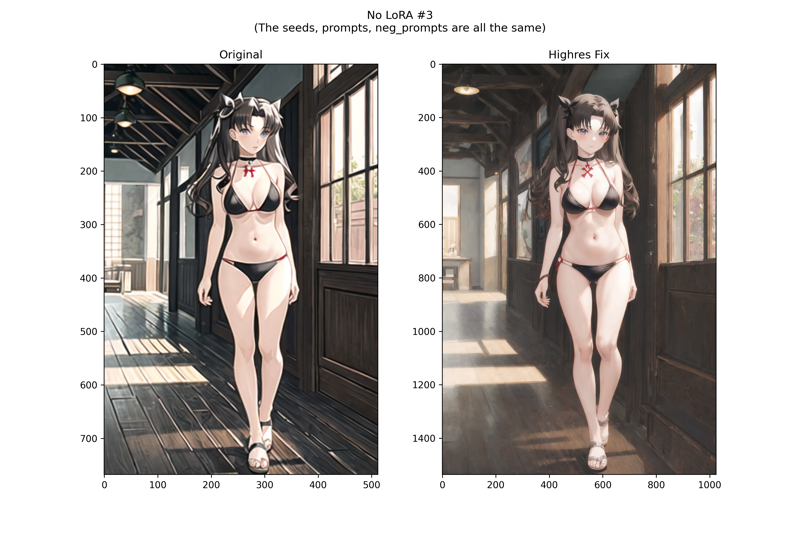

First, the comparisons without using LoRA:

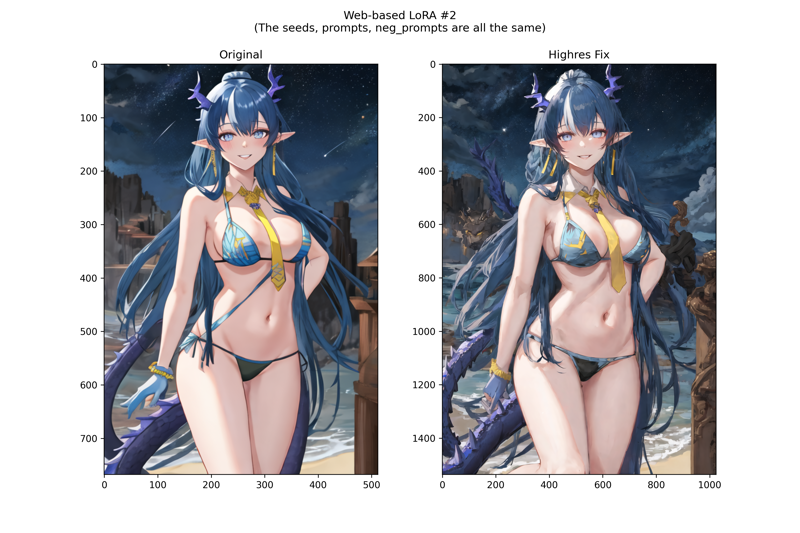

Next, the Web-based LoRA comparisons (LoRA link: https://civitai.com/models/125734?modelVersionId=153427, feel free to try it):

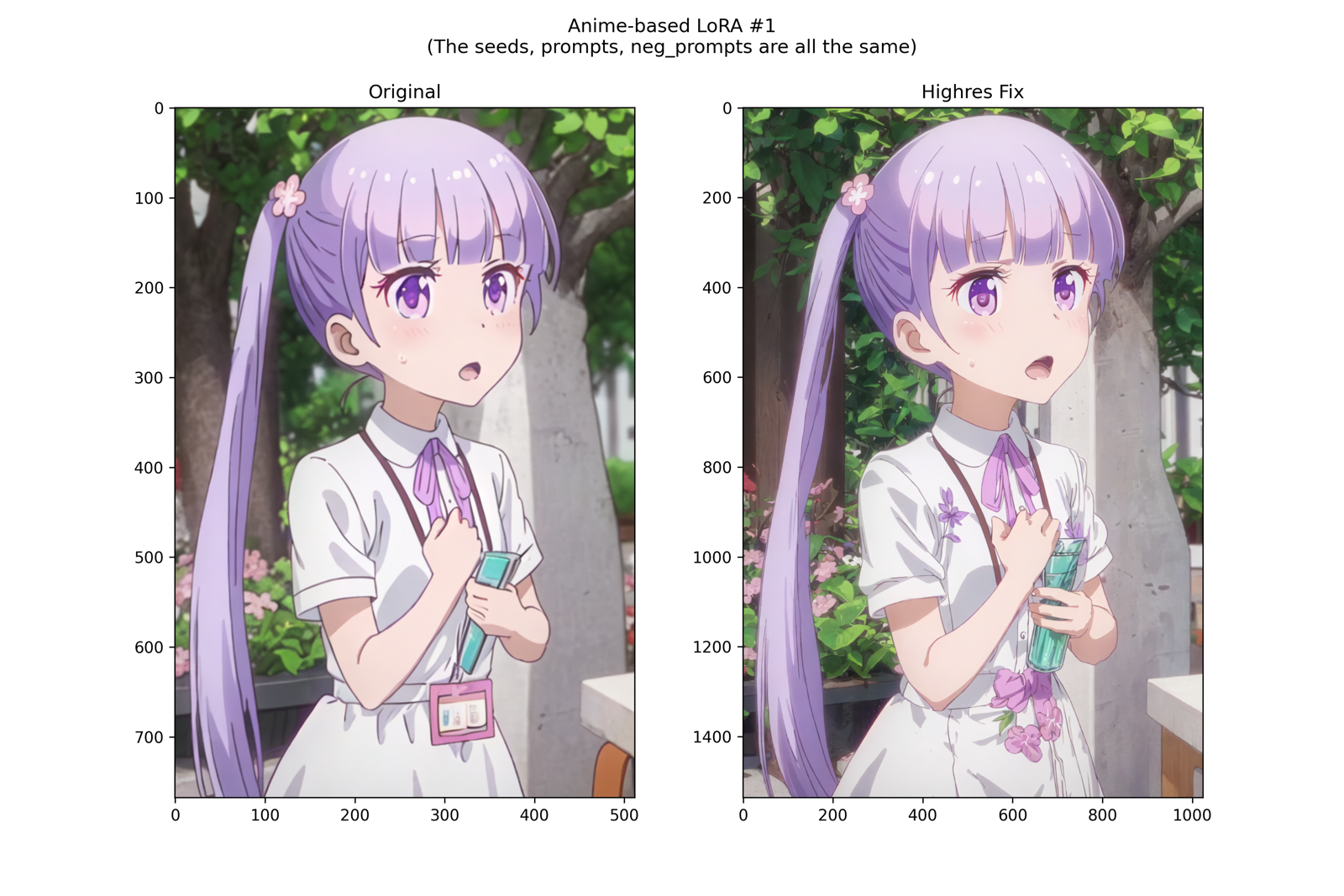

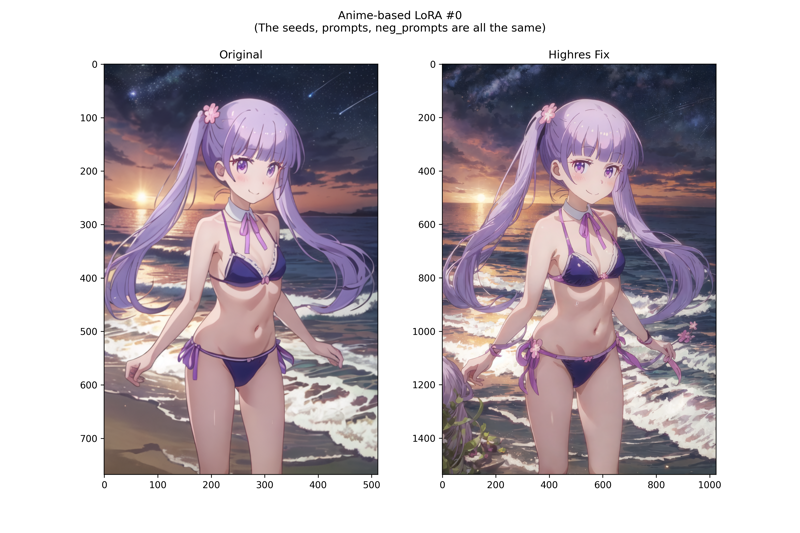

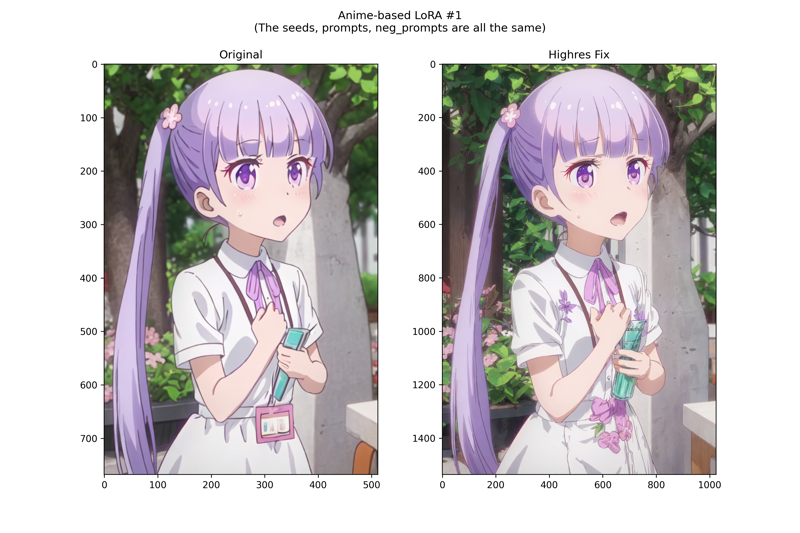

Then, the Anime-based LoRA comparisons (LoRA link: https://civitai.com/models/227219?modelVersionId=256330, feel free to try it):

The results are quite clear. In fact, we first noticed this possibility when other Civitai model creators (special thanks to @wiz_, @AsaTyr, and many others) achieved significantly better results than ours while using the same dataset, without any modification or filtering, and training under exactly the same conditions. After we discovered this, they generated images using our models and again produced significantly better-quality images than ours. After thoroughly checking every detail of the entire process, we found that the key to the problem lay in the seemingly inconspicuous highres fix. The improvement that highres fix brings to image generation is substantial.

This indirectly reveals a few interesting facts:

We have been competing on the same stage with mediocre-quality images generated by scripts, while other users presented the highest-quality images they could generate manually. Despite this, we achieved decent results.

Some users' evaluations of our models' quality were based entirely on the preview images. In other words, a significant portion of them may never have actually run the model, yet concluded that its quality is poor. Otherwise, they would certainly have noticed the significant improvement brought by highres fix. This is similar to publishing a paper on a clinical trial of myocardial stem cells, full of experimental data and claiming to have saved many patients, only for it to turn out the next day that myocardial stem cells don't actually exist and the paper is entirely fabricated. We find the two equally amusing.

It's an interesting discovery, and we have to admit how important a model's cover image is. So we will probably adopt a preview image generation process with higher overall quality in v1.5 of the automated pipeline.